Introduction

Compute Express Link (CXL) is a high-speed interconnect designed for efficient communication between CPUs and accelerators, memory devices, and other high-performance peripherals.

It was created by an industry consortium whose founding members include Intel, Microsoft, and other major technology companies.

Compared with using a conventional interconnect such as PCIe on its own, CXL offers several advantages, most notably cache-coherent access to memory and lower-latency memory semantics over the same physical link.

CXL has so far been specified in three main generations: CXL 1.1, CXL 2.0, and CXL 3.0.

This article focuses on CXL 3.0. The CXL Consortium has published the CXL 3.0 specification to give current and future data centers greater flexibility and composability.

Building on the previous generations, CXL 3.0 adds many new features and advantages, such as doubling bandwidth with no added latency.

How does CXL work?

The CXL interconnect standard is built on top of PCIe lanes. It provides a path for communication between the CPU and attached devices and their memory.

CXL 1.1 and CXL 2.0 use the PCIe 5.0 electrical interface, which transfers 32 GT/s per lane, or up to about 64 GB/s in each direction (128 GB/s combined) over 16 lanes.
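
As a rough check on the 16-lane figure, the arithmetic can be sketched as follows. This is an illustrative calculation only: it assumes the 32 GT/s per-lane rate of PCIe 5.0, treats each transfer as one bit per lane, and ignores encoding and protocol overhead, so usable throughput will be somewhat lower. The function name `raw_bandwidth_gb_per_s` is ours and not part of any CXL tooling.

```python
def raw_bandwidth_gb_per_s(gt_per_s: float, lanes: int) -> float:
    """Raw one-direction bandwidth in GB/s, ignoring encoding/protocol overhead."""
    bits_per_s = gt_per_s * 1e9 * lanes  # one bit per transfer per lane (assumption)
    return bits_per_s / 8 / 1e9          # bits -> bytes -> gigabytes


per_direction = raw_bandwidth_gb_per_s(32, 16)
print(per_direction)      # 64.0  (one direction)
print(per_direction * 2)  # 128.0 (both directions combined)
```

Calling the same function with 64 GT/s shows what doubling the per-lane rate does to the raw figure.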

The memory on attached devices and the memory of the CPU are kept coherent.

Compute Express Link Interconnect

In reaction to the exponential growth of data, the semiconductor industry is implementing a revolutionary architectural shift that will profoundly alter the performance, efficiency, and cost of data centers.

Server architecture, which has largely remained unchanged for decades, is now making a revolutionary leap to managing the yottabytes of data generated by AI/ML applications.

The idea that each server has its own dedicated processor, memory, networking hardware, and accelerators is being replaced by a disaggregated “pooling” paradigm that intelligently matches resources to workloads.

Compute Express Link Protocols & Standards

The Compute Express Link (CXL) standard supports a variety of use cases via three protocols: CXL.io, CXL.cache, and CXL.mem.

CXL.io: This protocol builds on PCIe’s widespread industry acceptance and familiarity and is functionally equivalent to the PCIe 5.0 protocol. CXL.io, the fundamental communication protocol, is adaptable and covers a variety of use cases.

CXL.cache: Accelerators can efficiently access and cache host memory using this protocol, which was created for more specialized applications to achieve optimal performance.

CXL.mem: Using load/store commands, this protocol enables a host, such as a CPU, to access device-attached memory.

Together, these three protocols make it possible for computer components, such as a CPU host and an AI accelerator, to share memory resources coherently.

In essence, this facilitates communication through shared memory, which simplifies programming.

While CXL.io has its own link and transaction layer, CXL.cache and CXL.mem are combined and share a common link and transaction layer.

What is CXL 3.0?

CXL 3.0 is the most recent and most capable revision of the CXL specification. It was developed in response to the growing demand for higher bandwidth and for more flexible, composable use of memory and accelerators in the data center.

CXL 3.0 builds on the success of earlier iterations by doubling the data rate and adding fabric capabilities, while remaining backward compatible with them.

Peer-to-peer direct memory access is introduced in CXL 3.0, and memory pooling is improved so that several hosts can cooperatively share a memory space on a CXL 3.0 device.

These capabilities enable new usage models and more flexibility in data center architectures.

Highlights of the CXL 3.0 specification:

- Fabric capabilities

- Multi-head and Fabric Attached Devices

- Enhanced fabric management

- Composable disaggregated infrastructure

- Better scalability and improved resource utilization

- Enhanced memory pooling

- Multi-level switching

- New enhanced coherency capabilities

- Improved software capabilities

- Double the bandwidth to 64 GT/s

- Zero added latency over CXL 2.0

- Full backward compatibility with CXL 2.0, CXL 1.1, and CXL 1.0

Features of CXL 3.0

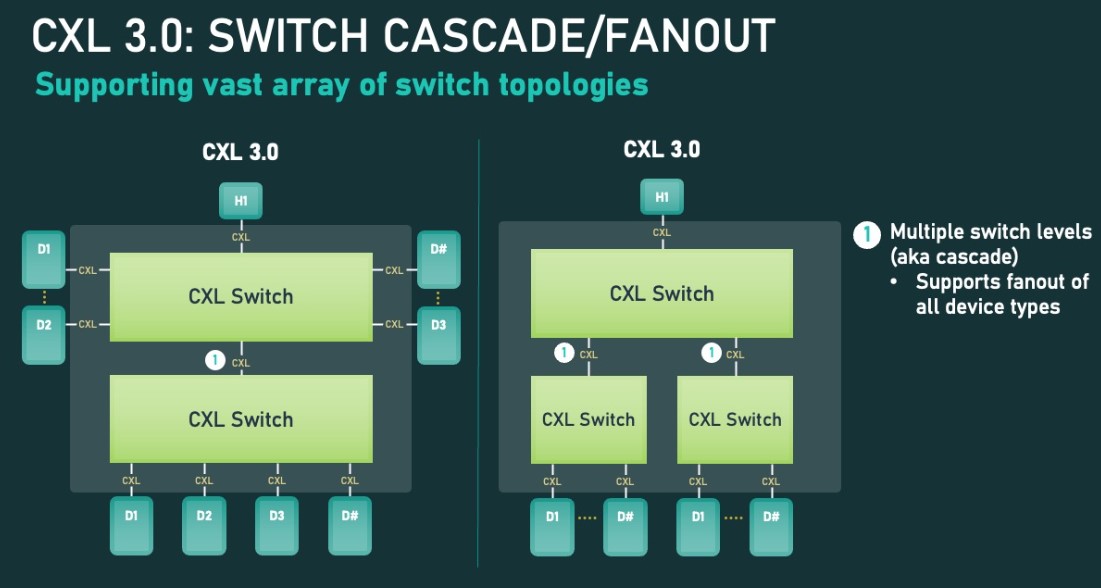

CXL 3.0 switch and fanout capability

The new CXL switch and fanout functionality is one of CXL 3.0’s key features. Switching was added in CXL 2.0, enabling numerous hosts and devices to sit on a single level of CXL switches.

The CXL topology can now support many switch layers thanks to CXL 3.0. More devices can be added, and each Enterprise and Datacenter Standard Form Factor (EDSFF) shelf can have a CXL switch in addition to a top-of-rack CXL switch that connects hosts.
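
The difference between single-level and multi-level switching can be sketched with a toy topology model. This is a minimal illustration, not a representation of any real CXL software interface: the `Node` class, the `switch_levels` function, and the node names are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    kind: str  # "host", "switch", or "device"
    children: list[Node] = field(default_factory=list)


def switch_levels(node: Node) -> int:
    """Largest number of switches on any path from this node down to a leaf."""
    below = max((switch_levels(c) for c in node.children), default=0)
    return below + (1 if node.kind == "switch" else 0)


# A top-of-rack switch feeding a per-shelf switch, as described above.
shelf = Node("shelf-switch", "switch", [Node("mem0", "device"), Node("mem1", "device")])
tor = Node("tor-switch", "switch", [shelf])
host = Node("host0", "host", [tor])

levels = switch_levels(host)
print(levels)  # 2
print("needs multi-level switching" if levels > 1 else "fits a single switch level")
```

A topology that reports more than one level is the kind that a single level of switches cannot express.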

CXL 3.0 device-to-device communications

CXL 3.0 also adds peer-to-peer (P2P) communication between devices. P2P enables devices to communicate directly with one another without going through a host, which lets P2P-capable systems operate more efficiently.

CXL 3.0 Coherent Memory Sharing

With CXL 3.0, coherent memory sharing is now supported. This is significant because it goes beyond CXL 2.0, which only allows a memory device to be partitioned among various hosts and accelerators. In contrast, CXL 3.0 permits all hosts in the coherency domain to share the same memory.

As a result, memory is used more effectively. Consider a situation where numerous hosts or accelerators can access the same data source. Coherently sharing that memory is a considerably more difficult task, but it also promotes efficiency.
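
The contrast between partitioning and sharing can be sketched with a toy model. This is only an illustration of the ownership rules described above: the `MemoryDevice` class and its `allow_sharing` flag are hypothetical, and the hardware coherency mechanism itself is not modeled.

```python
class MemoryDevice:
    """Toy model of a pooled memory device divided into fixed regions."""

    def __init__(self, regions: int, allow_sharing: bool):
        self.allow_sharing = allow_sharing
        self.owners = {r: set() for r in range(regions)}

    def attach(self, host: str, region: int) -> bool:
        owners = self.owners[region]
        if owners and host not in owners and not self.allow_sharing:
            return False  # partition-only: region already belongs to another host
        owners.add(host)
        return True


partitioned = MemoryDevice(regions=2, allow_sharing=False)  # partitioning only
shared = MemoryDevice(regions=2, allow_sharing=True)        # sharing permitted

print(partitioned.attach("hostA", 0), partitioned.attach("hostB", 0))  # True False
print(shared.attach("hostA", 0), shared.attach("hostB", 0))            # True True
```

In the partitioned case the second host has to take a different region, whereas in the shared case both hosts see the same one.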

CXL 3.0: Multiple Devices of all types per root port

CXL 3.0 eliminates the earlier restriction on the number of Type-1/Type-2 devices that could be connected downstream of a single CXL root port.

Whereas CXL 2.0 permitted only one of these processing devices downstream of a root port, CXL 3.0 removes the limit entirely. Depending on the objectives of the system builder, a CXL root port can now support a full mix-and-match setup of Type-1/2/3 devices.

Most notably, attaching several accelerators to a single switch increases density (more accelerators per host) and makes the new peer-to-peer transfer features more useful.
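
The root-port rule can be sketched as a simple check. The mapping of device types to protocols follows the standard CXL device classes (Type 1 uses CXL.io and CXL.cache, Type 2 uses all three protocols, Type 3 uses CXL.io and CXL.mem). The function `root_port_config_allowed` is a hypothetical helper that encodes only the simplified rule described in this section, not the full specification.

```python
from __future__ import annotations

# Protocols used by each CXL device type.
PROTOCOLS = {
    "type1": {"CXL.io", "CXL.cache"},             # caching accelerator
    "type2": {"CXL.io", "CXL.cache", "CXL.mem"},  # accelerator with its own memory
    "type3": {"CXL.io", "CXL.mem"},               # memory expander
}


def root_port_config_allowed(devices: list[str], cxl_major: int) -> bool:
    """Simplified rule: before CXL 3.0, at most one Type-1/Type-2 device per root port."""
    caching = sum(1 for d in devices if "CXL.cache" in PROTOCOLS[d])
    if cxl_major >= 3:
        return True
    return caching <= 1


mix = ["type1", "type2", "type3"]
print(root_port_config_allowed(mix, cxl_major=2))  # False
print(root_port_config_allowed(mix, cxl_major=3))  # True
```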

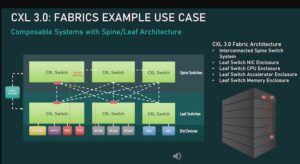

CXL 3.0: Fabrics Example

This versatility allows for non-tree topologies like rings, meshes, and other fabric structures even with only two layers of switches. There are no constraints on the types of individual nodes, and they can be hosts or devices.

CXL 3.0: Fabrics Example Use Cases

CXL 3.0 can even handle spine/leaf designs, where traffic is routed through top-level spine nodes whose sole purpose is to further route traffic back to lower-level (leaf) nodes that in turn contain actual hosts/devices, allowing truly unusual configurations.
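
The spine/leaf traffic pattern can be sketched as follows. This is a toy routing function under stated assumptions: the endpoint names, the `LEAF_OF` table, and the way a spine is picked are all hypothetical and chosen only to make the hop sequence visible.

```python
# Hypothetical fabric: which leaf switch each endpoint hangs off.
LEAF_OF = {"host0": 0, "host1": 0, "accel0": 1, "mem0": 2}
NUM_SPINES = 2


def route(src: str, dst: str) -> list:
    """Return the hop-by-hop path between two endpoints in a leaf/spine fabric."""
    a, b = LEAF_OF[src], LEAF_OF[dst]
    if a == b:
        return [src, f"leaf{a}", dst]  # same leaf: no spine hop needed
    spine = (a + b) % NUM_SPINES       # simple deterministic spine choice
    return [src, f"leaf{a}", f"spine{spine}", f"leaf{b}", dst]


print(route("host0", "host1"))  # ['host0', 'leaf0', 'host1']
print(route("host0", "mem0"))   # ['host0', 'leaf0', 'spine0', 'leaf2', 'mem0']
```

Traffic between endpoints on different leaves always crosses exactly one spine, which is what keeps hop counts uniform in this kind of design.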

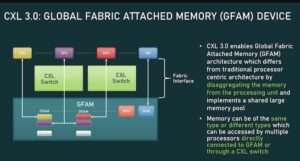

CXL 3.0: Global Fabric Attached Memory

Finally, these new memory, topology, and fabric features come together in what the CXL Consortium refers to as Global Fabric Attached Memory (GFAM).

In a nutshell, GFAM advances CXL’s memory expansion board (Type-3) concept by further disaggregating memory from a specific host. In that sense, a GFAM device is essentially its own shared memory pool that hosts and other devices can access as needed.

Additionally, both volatile and non-volatile memory, such as DRAM and flash memory, can be combined in a GFAM device.

Advantages of CXL 3.0

CXL 3.0 has several advantages over plain PCIe and earlier CXL generations. Peer-to-peer direct memory access (P2P DMA) is among its most interesting features.

Together with memory sharing, this lets many hosts and devices share the same memory space and resources. As a result, usage-model flexibility and scalability can improve along with performance.

Another advantage of CXL 3.0 is support for higher speeds and improved power efficiency, which leads to better resource utilization and performance.

Additionally, CXL 3.0 makes it possible for devices to interact more quickly with one another, which may boost system throughput.

Lastly, CXL 3.0 features enhanced link training capabilities that enable quicker detection and connection of system components, so bringing up devices is faster and more efficient than before.

How does CXL 3.0 work?

CXL 3.0, the most recent CXL standard, offers several upgrades over earlier iterations. Most significantly, it moves to the PCIe 6.0 physical layer, which doubles the per-lane data rate to 64 GT/s using PAM4 signaling and fixed-size 256-byte flits.

Other improvements in CXL 3.0 include multi-level switching, peer-to-peer communication, coherent memory sharing, and fabric-attached memory, as described in the sections above.

How LFT can help with CXL

LFT helps CXL in various ways, some of which are listed below:

- LFT has a proven track record with the PCIe/CXL Physical Layer and can therefore provide Physical Layer support.

- LFT can provide support in architecting CXL-based solutions.

- LFT will release a CXL IP during the first quarter of 2023.

Conclusion

CXL 3.0 is a significant step forward for data center interconnects. By doubling bandwidth and adding fabric capabilities, multi-level switching, peer-to-peer communication, and coherent memory sharing, it makes composable, disaggregated architectures far more practical. CXL 3.0 ought to be taken into account if you’re designing next-generation server, accelerator, or memory systems.
